Introduction

In early 2023, we at the CyberPeace Institute responded to the global surge of interest in generative AI by examining our own AI usage. We recognized the need to ensure that our use of AI was responsible, human-centric, and aligned with our mission and organizational values. This realization began our journey to develop a targeted approach to responsible use of AI, which we documented and shared in October 2023.

AI disruption, AI ethics, and the development of our principles approach

Our journey started with extensive research into the principles of ethical and responsible AI development and deployment, and into existing AI strategies from other organizations. However, recognizing the gap between theory and our unique operational needs, we shifted our focus inward to understand how our teams were already using AI. This introspection and dialogue across departments resulted in our approach to AI: five principles to ensure that AI supports, rather than replaces, our human expertise, decision-making, and knowledge creation.

Despite reaching this critical milestone, we knew it was only the first step in an ongoing journey. We needed more than high-level principles to guide us in our daily work. This realization shaped the next steps of our journey, which we document in this blog post.

From Principles to Operational Guidelines

Operationalizing our AI principles involved three key steps:

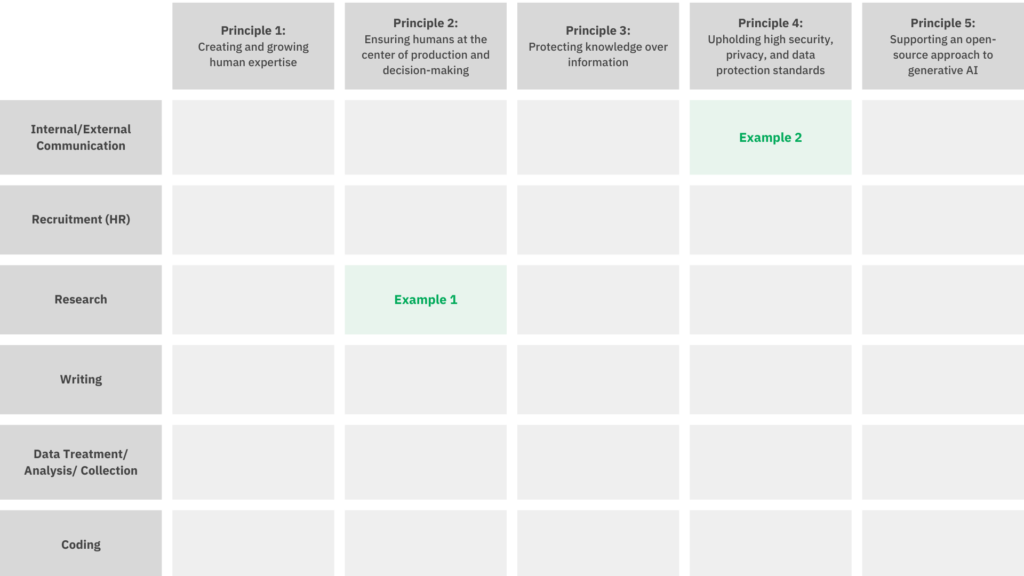

- We identified six core workstreams across all teams at the Institute (internal/external communication, recruitment/human resources, research, writing, data treatment, and coding) to guide the creation of our AI policy. Using our five principles as a foundation, we identified specific dos and don’ts for each workstream.

- We condensed these dos and don’ts into clear, concise, and operational policies for each of our five principles.

- We engaged colleagues and team leads, incorporating their feedback throughout the process.

How did this look in practice?

Identifying the dos and don’ts when using AI responsibly

In the first step, we crossed each of the six workstreams with each of our five principles and identified dos and don’ts for every combination.
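The crossing described above can be pictured as a small grid with one cell per workstream and principle. The following is a minimal sketch only, not the Institute's actual tooling: the names `WORKSTREAMS`, `PRINCIPLES`, and `grid` are hypothetical, the principles are referred to by number, and the two sample entries paraphrase rules quoted elsewhere in this post.

```python
from itertools import product

# The six workstreams named in this post.
WORKSTREAMS = [
    "internal/external communication",
    "recruitment/human resources",
    "research",
    "writing",
    "data treatment",
    "coding",
]

# The five principles, referred to by number only (hypothetical representation).
PRINCIPLES = [1, 2, 3, 4, 5]

# One cell per (workstream, principle) pair, each holding its dos and don'ts.
grid = {
    (workstream, principle): {"dos": [], "donts": []}
    for workstream, principle in product(WORKSTREAMS, PRINCIPLES)
}

# Sample entries paraphrased from the examples in this post.
grid[("research", 2)]["dos"].append(
    "Use AI for preliminary research and topic overviews."
)
grid[("internal/external communication", 4)]["donts"].append(
    "Include credentials or PII in AI prompts."
)

print(len(grid))  # 6 workstreams x 5 principles = 30 cells
```

Laying the rules out this way makes it easy to spot obligations that recur across several cells.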

We ensured that all the dos and don’ts were firmly rooted in our principles and were clear and actionable for any Institute staff member. The two examples below show the dos and don’ts identified for our research workstream under principle two, “Ensuring humans are at the center of production and decision-making,” and for our communication workstream under principle four, “Upholding high security, privacy, and data protection standards”:

Example 1:

Principle 2, research workstream

- Do use AI for preliminary research, to provide an overview of a topic, and to help understand concepts and theories.

- Do use AI as a complementary research tool to other means of research (such as Google Scholar, libraries, publishing organizations, news media, or think tank organizations).

- Be aware that AI research tools might have biases and will not always provide a representative topic overview.

- Do not solely rely on AI to research a topic, especially if the topic involves factual or historical data.

- Do not trust everything AI says, especially generative AI and large language models, which are prone to hallucinations and have a data cut-off date after which no information was added to the models.

Example 2:

Principle 4, communication workstream

- Do make sure that all AI-supported communications and social media posts adhere to the Institute’s social media policy.

- Do not include any credentials or Personally Identifiable Information (PII), such as IP addresses or email addresses, in AI prompts.
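As an illustration of the last rule, a prompt can be screened before it is sent to an AI tool. The snippet below is a minimal sketch under stated assumptions, not a description of the Institute's own tooling: the function name `redact_prompt` and both patterns are hypothetical, they cover only email addresses and IPv4 addresses, and they do not detect credentials or other kinds of PII.

```python
import re

# Illustrative patterns only; real PII detection needs a broader, tested rule set.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
IPV4_RE = re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b")


def redact_prompt(text: str) -> str:
    """Replace email addresses and IPv4 addresses with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    text = IPV4_RE.sub("[IP_ADDRESS]", text)
    return text


prompt = "Summarize the alert from alice@example.org about host 192.0.2.15."
print(redact_prompt(prompt))
# Summarize the alert from [EMAIL] about host [IP_ADDRESS].
```

A filter like this can catch obvious slips, but it does not replace the human judgment the policy asks for.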

This exercise revealed that certain obligations were present across different workstreams, such as exercising due diligence and critical thinking for any AI-generated output. This allowed us to create a condensed and targeted internal AI use policy.

An internal AI use policy revolving around the five principles

In the second step, we condensed the rules identified for each workstream into a policy structured around our five principles. For example, under principle two, we established the following policies for colleagues using AI in their work, based on the dos and don’ts identified in the workstream exercise above:

Ensuring humans are at the center of production and decision-making

Users must:

- Never blindly publish AI-generated content; always seek to validate or verify outputs using reliable sources.

- Ensure that AI-generated content is appropriately reviewed and approved by a human before it is published or used for decision-making.

- Ensure that AI tools are used consistently with the mission, values, and objectives of the CyberPeace Institute by regularly consulting with supervisors and colleagues to align AI-generated outputs with organizational goals.

- Exercise due diligence and critical thinking when using AI-generated outputs, as AI systems may produce biased, inaccurate, or inappropriate results.

Inclusive and cooperative process

Since the beginning of our journey, involving all colleagues, including management, in discussing and developing the principles and policy has been crucial to our organization-wide effort. This ensured that the final policy was shaped by all staff to fit our concrete work.

Operational Guidelines

The final internal AI use policy mirrors the principles approach while being more actionable and operational. Although it is now in place, the policy will remain a living document that allows us to adapt to today's fast-paced technological environment. These guidelines enable the Institute to stand on solid ground, for the time being, and to be confident in our ability to ensure that our use of AI aligns with our mission and values. Most importantly, they allow us to look toward the next steps on our journey to responsible use of AI.

Next steps on our journey

In preparation for presenting the internal AI policy to the entire staff, we conducted a small survey among staff members to assess their AI literacy and interests. This survey paved the way for our colleagues’ first capacity-building session, where we presented practical AI use cases for our daily work. Given the dynamic nature of AI and its applications, we envisage a continuous internal learning approach. This approach will include awareness-raising sessions, open question forums, and deep dives into AI-related topics (such as the role of AI in enhancing disinformation) to ensure our team remains at the forefront of AI knowledge. The aim is to equip our colleagues with the tools to use AI responsibly to improve their work and to advance our collective mission of protecting the most vulnerable in cyberspace.

AI capacity building

Looking beyond the Institute, we are expanding our capacity-building efforts to include sessions on AI. We now offer training for NGO and foundation boards and management to assess their AI needs and develop their own AI strategies. For example, in March 2024, we held a session on AI as part of the CyberSchool initiative. We continue to refine the AI capacity-building courses that we are excited to offer NGOs and foundations to help strengthen the digital resilience of the philanthropic and civil society sectors.

AI for Cyberpeace

Finally, our journey to responsible use of AI and our efforts to support NGOs and other organizations in the philanthropic ecosystem that seek to follow a similar trajectory have grown into a larger programme: AI for Cyberpeace. In this programme, we continue our work to strengthen NGOs’ digital strategies (including AI strategies) and seek to leverage AI to secure NGOs’ digital operations and empower NGOs through AI-powered data analysis and cyber incident tracers. This programme will act as a framework to guide our AI-related work in the future. To further our impact, we plan to create an AI4CyberPeace global community, spanning philanthropies, civil society organizations, academia, and corporations, to contribute knowledge to global AI policymaking and expose the malicious use of AI in the cyber kill chain. We strive to empower underrepresented communities in the AI regulation space and to maintain a community of those capable and willing to advocate for AI to remain a force for good rather than become a weapon.

Conclusion

This ongoing journey has been crucial for securing our mission and an invaluable learning experience. A solid foundation is essential for our continued work on AI, and our internal AI use policy lays a critical stone in this foundation.

Furthermore, we aim to inspire other organizations on a similar path towards responsible AI use, which requires a collective and collaborative effort. To this end, we’re committed to sharing knowledge and strategies with partners and stakeholders, fostering a sector-wide, human-centric approach to AI and emerging technologies. We do this as we always have: by supporting and assisting others and protecting the most vulnerable in cyberspace. Moreover, transparency in the use and governance of AI is imperative, and we are dedicated to maintaining a high level of transparency. This blog post, detailing the conception and content of our internal AI policy, underscores this commitment.

We look forward to hearing from you, our partners, stakeholders, and any interested readers. We appreciate your thoughts and feedback on our journey.

Connect with us and share your reflections at: [email protected]