Artificial intelligence (AI) has been the centre of attention for the past year. As people start to use the many newly available AI tools both at work and in their free time, individuals, companies, and organisations have started asking an important question: how can we make sure that we use AI responsibly? This question has been on our minds at the CyberPeace Institute, and it led us to embark on a journey to answer it and to develop our approach to ensuring responsible use of AI at the Institute. Here is how this journey unfolded.

The dawn of our AI journey

In November 2022, we all witnessed the release of ChatGPT by OpenAI, which captivated a global audience with its ability to generate human-like text. As we watched the world react, we too were caught up in the hype around AI, as our colleagues started to explore ChatGPT and other generative AI tools. Around the same time, we began developing our machine learning (ML)-driven data pipeline to enhance our investigative capabilities.

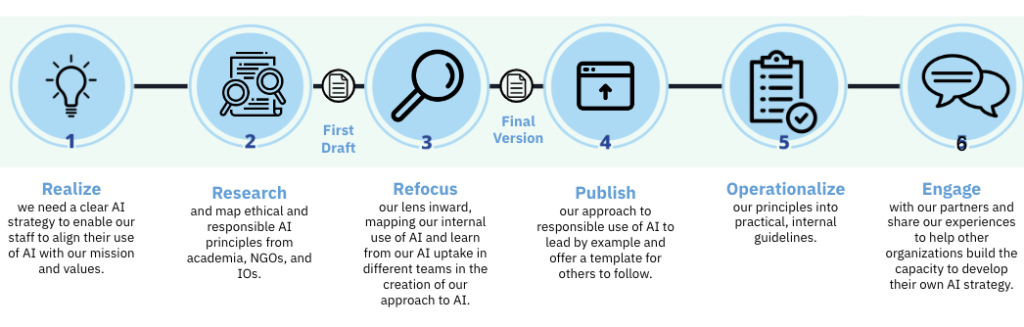

While AI models both new and old promise incredible opportunities, it became increasingly obvious that they also bring with them a range of ethical issues. Amidst the AI revolution happening inside and outside of the CyberPeace Institute, we realised we needed a clear approach to align our AI usage with our values and mission. This realisation was the starting point for our journey to develop a set of principles that would ensure that our use of AI is responsible, meaning that we minimise the risk of intentional and unintentional misuse that may result in harm to individuals, the organisation, our mission, partners, and beneficiaries.

Ultimately, this ongoing journey has been a valuable learning experience for us, and one we are eager to share both with those currently undertaking this internal process and with those who wish to begin but might not know where to start.

Our journey to an approach to responsible use of AI

From theory to practice of ethical and responsible AI

At the start, we dedicated time to researching and mapping out the ethical and responsible AI principles developed by academia, civil society, international organisations, and the private sector, and to researching how this new wave of general artificial intelligence will impact the cybersecurity landscape.

Although well-intended, this first step resulted in a general strategy, out of touch with our unique identity as an organisation, and too high-level in its scope. Most importantly, it couldn’t effectively guide the CyberPeace Institute staff in our operations and collaborations with partners, donors, and especially our beneficiaries.

It was a pivotal moment of realisation: even informed by the wealth of existing expertise on ethical and responsible use of AI, we understood the need to develop our own strategy starting from the operational reality and concreteness of our work. After all, our strategy would have to prepare us for the future while being grounded in our work: our strategy had to be both operational and aspirational at the same time.

Our inward exploration

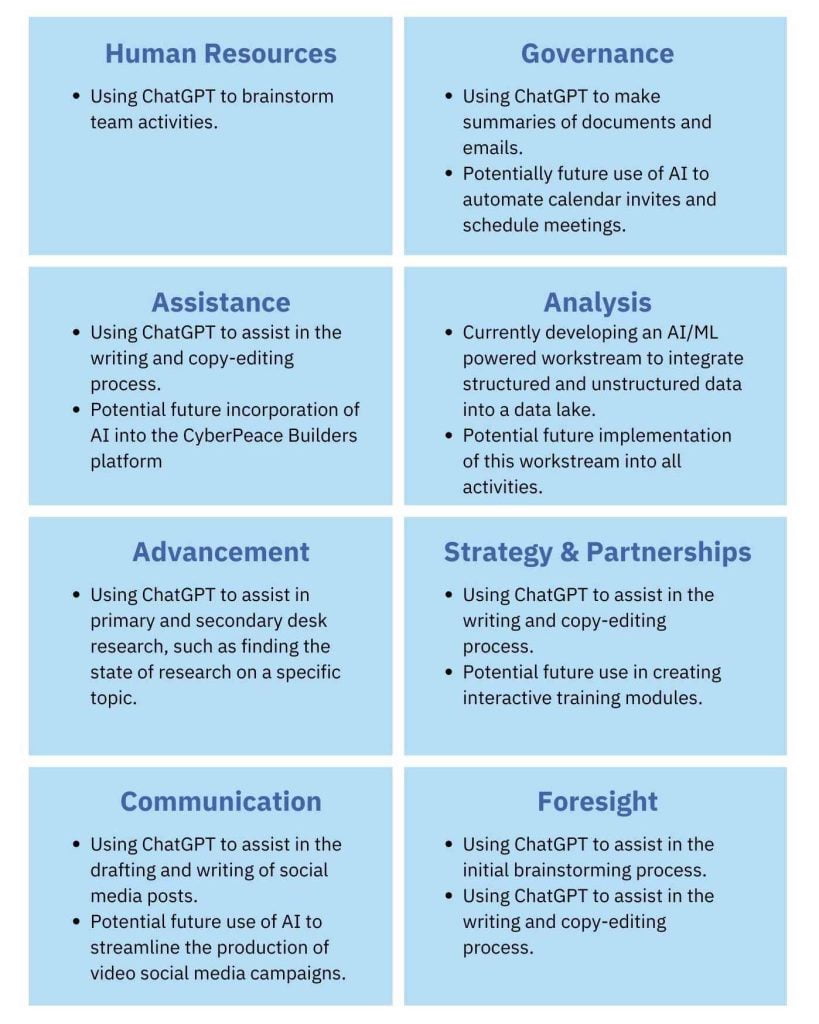

We turned our lens inward and looked at the uptake of AI tools in different teams at the heart of the Institute. Conversations across departments gave us insight into the practical ways our colleagues use AI tools. Internally, our distinctive operating model translates into a wide variety of capabilities and workflows, a highly diverse staff, and a range of ideas and visions of how AI can and could be used.

The conversations with colleagues led to a realisation: we were all using ChatGPT. The revolutionary nature of this technology also shows in how quickly it became pervasive. We learned how teams had started using ChatGPT to improve and streamline their writing processes, we understood how we used ML models to facilitate the integration of unstructured data into our database, and we learned what our colleagues thought about AI, from excitement to worries. Together, this tapestry of experiences and use cases helped us shape the evolution of our strategy.

Partial mapping of the current and potential future internal use of AI (work in progress)

From strategy to principles on AI use

To ensure that our use of AI aligns with our values and mission, we crafted an approach to AI that is deeply anchored in our day-to-day operations, our operating model, and our staff, in keeping with our constant commitment to cyberpeace. For this reason, we identified three key areas present in all of our work: how we create and grow expertise, how we generate knowledge, and how we make decisions. Then, we defined the following operating principles:

First, we commit to creating and growing the human expertise needed to achieve cyberpeace. Therefore, we will never seek to blindly replace human-managed processes with AI, especially for entry-level jobs that could easily be carried out by AI.

Second, we commit to never using AI to autonomously produce knowledge or make decisions. We commit to human oversight in each step of our processes, and through this human-centric approach, we want to ensure accountability for our work and final products.

Third, we commit to safeguarding human-produced knowledge and supporting a higher quality knowledge environment across the internet by not diluting the concentrated quality information space with AI-generated content.

Fourth, we uphold our existing strong cybersecurity, privacy, and data protection standards.

Fifth and last, we commit to supporting an open-source approach in our AI projects. Open-source development enables a cross-disciplinary approach to research and experimentation, and it offers an effective way to ensure transparency and explainability in AI.

Our five principles support our three core workstreams.

This has been a collective journey: we drafted the principles with our colleagues in mind and actively sought their input and thoughts. We presented our approach to the whole team to gather feedback, which led to some final tweaks before we published our Approach to Responsible Use of AI in September 2023.

From principles to guidelines for responsible use of AI

Although we published our approach to AI, our AI journey does not end here. Alongside establishing our own principles for responsible AI use, we have started working on concrete, operationalised internal guidelines on how our staff may, and may not, use AI. We have also started increasing our internal AI literacy, and we prioritise continued training for our staff on how to use AI tools responsibly and on building awareness of both the risks and opportunities of AI.

Lessons Learned

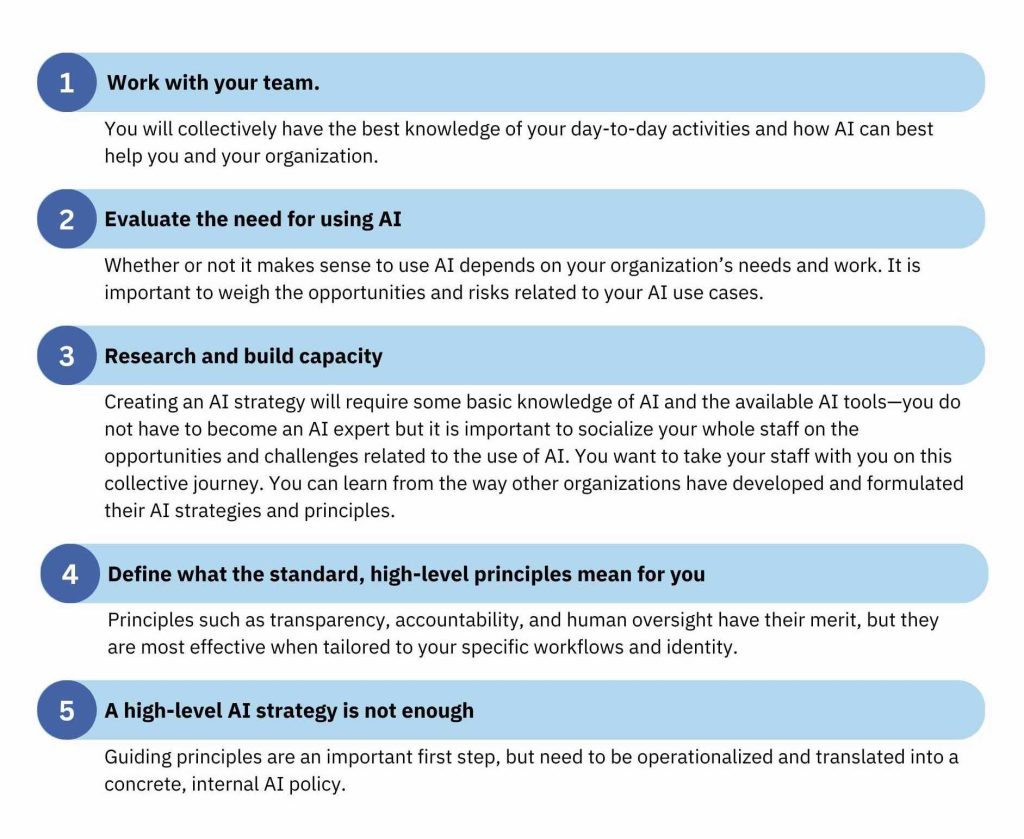

Through this journey, we have gathered valuable experiences and lessons that we wish to share with our partners and anyone interested in pursuing a similar goal.

Looking ahead at upcoming steps and goals on our AI journey

As we look ahead at how to operationalise our principles, we also look beyond our organisational confines, seeking to share knowledge and capabilities in collaboration with partners and stakeholders. Building on the experience we gained through our journey, we are working towards a methodology that enables other NGOs to create their own AI strategies and principles. Further, we will soon be offering training for boards and management teams of NGOs to guide them through the challenges of creating and implementing AI strategies internally.

Concluding this reflection, we recognise that our path toward responsible use of AI will always be a work in progress. It is a continual journey of learning, adapting, and striving to align emerging technologies with ethical, human-centric principles.

Your thoughts, queries, and feedback are invaluable in this continued journey. Together, let’s tread this path, ensuring that the digital realm is congruent with our collective pursuits of cyberpeace and security.

Connect with us and share your reflections at: [email protected]